Coming 2nd in a Kaggle Competition

How my brother and I came second in a Google Health + AI competition.

Very stoked to announce my brother Josh and I placed 2nd in Google’s recent MedGemma Impact Challenge Kaggle competition.

Our entry, Sunny: Private Skin Tracker, was awarded 2nd place out of ~900 entries.

The brief:

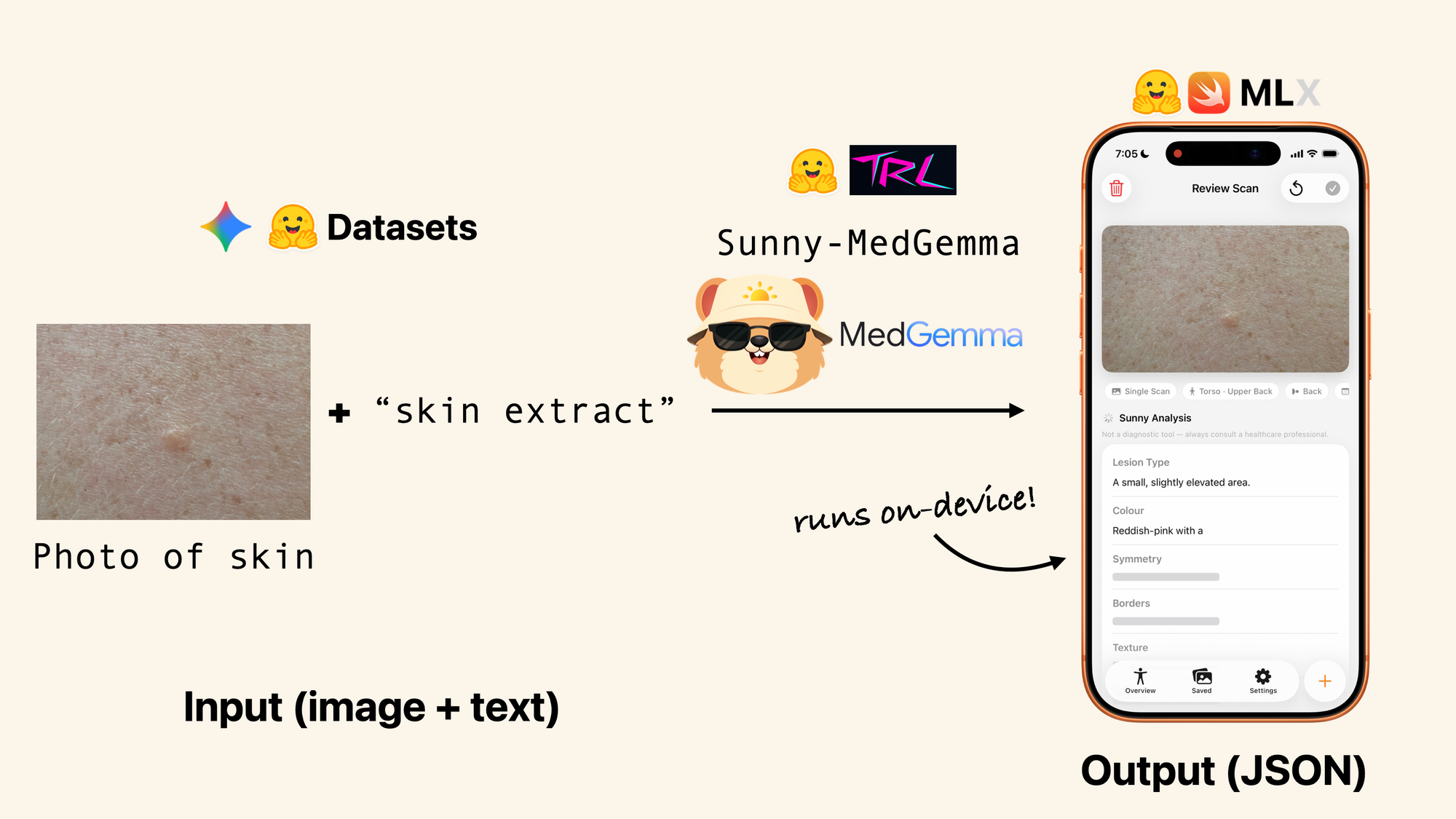

Use Google Research’s new open-source vision-language model MedGemma-1.5-4B (Med = medical domain focused, Gemma = Google’s family of smaller open models built on the same research as Gemini) for a unique health-focused use case, and provide communication materials (text and video) to go with it.

Sunny overview

Being in Australia, naturally, my brother and I turned to skin cancer.

Skin cancer is Australia’s most common and most expensive cancer to treat.

Later-stage skin cancer can cost $100k+/year to treat (official details in the full writeup).

Treating earlier-stage skin cancer, by contrast, is not only much cheaper (usually under $1000) but, if treated successfully, also has a much higher 5-year survival rate.

Australia already does a world class job of raising skin cancer awareness on the prevention side of things.

And our treatments are also state of the art.

However, what’s missing is a national screening program.

That’s where Sunny comes in.

Sunny is an iOS application that runs a fine-tuned version of MedGemma locally on the phone to extract visual details of a skin spot.

All processing happens directly on the device so everything stays private.

When you’re ready, you can export a structured report to discuss with your doctor.
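To make the idea of a structured report concrete, here is a minimal sketch of what such an export could look like. It is written in Python for brevity rather than the Swift the app itself would use, and every name in it (`SpotObservation`, `build_report`, the field names and the JSON layout) is a hypothetical illustration, not Sunny's actual schema. In keeping with the app's approach, the report holds descriptive details only and no diagnosis.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class SpotObservation:
    """One recorded look at a skin spot. All field names are hypothetical."""
    body_location: str                    # e.g. "left forearm"
    observed_on: str                      # ISO date string, e.g. "2025-01-15"
    visual_details: dict[str, str] = field(default_factory=dict)
    notes: str = ""                       # free text added by the user


def build_report(observations: list[SpotObservation]) -> str:
    """Serialise observations into a JSON report, oldest observation first."""
    # ISO date strings sort chronologically when compared as plain text.
    ordered = sorted(observations, key=lambda o: o.observed_on)
    report = {
        "report_type": "skin_self_check",
        "contains_diagnosis": False,      # descriptive details only
        "spot_count": len(ordered),
        "observations": [asdict(o) for o in ordered],
    }
    return json.dumps(report, indent=2)


if __name__ == "__main__":
    example = [
        SpotObservation("left forearm", "2025-06-01", {"colour": "brown"}),
        SpotObservation("upper back", "2025-01-15", notes="first check"),
    ]
    print(build_report(example))
```

Ordering the observations by date is one possible design choice: it lets a doctor read through how a spot has been described over time, which fits the habit-building aim.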

Crucially, Sunny doesn’t diagnose whether a spot might be cancer or not. Instead, it focuses on building the habit of checking your own skin every 6-12 months.

This builds on the fact that a large portion of skin cancers are discovered by patients themselves or their partners.

After all, who sees your skin more than you or your significant other?

Sunny’s goals are:

- Get more people doing regular, structured self-skin examinations.

- Catch more skin cancers at earlier stages, leading to earlier treatment and, in turn, lower costs and potentially saved lives.

Productionizing Sunny, the potential road ahead

The submission we put forward for the competition is a working prototype to pitch the vision. For it to be deployed as a functional system, much more work would be required (this is noted in the full writeup).

If we were to push Sunny to realise its full potential, we would:

- Apply to Australian governing bodies for grant funds to continue development (we’d never want to charge for Sunny; we’d rather make it available as a public health tool). A notable feature of Sunny is that once it is running on a person’s phone, it has no running costs: the app is fully self-contained and runs on the processing power of the person’s iPhone.

- Partner with doctors and dermatologists to make the data pipeline more rigorous.

- Partner with diverse groups of people to test the application more than we were able to ourselves (we only tested it on our own skin).

- Talk with groups such as the Cancer Council to see how it would be best to promote such a tool.

Working on this project has given us a glimpse into the future of healthcare.

From our perspective, with recent AI advancements, we have the opportunity for everyone to have their own private medical assistant on their own personal device.

Even more so, we see plenty of opportunity to use such technologies not to provide conclusive diagnoses or replace certain services, but to encourage people to empower and educate themselves, giving them a reason to start a discussion with their doctor or other medical professionals.

In this light, we see our solution as psychological (developing a habit) as much as it is technical.

Resources

Sunny is 100% open-source, from data to models to application code. If we were to deploy it on a national scale, we’d intend to keep it this way so third parties can investigate it thoroughly.

- Sunny code on GitHub

- Full Sunny writeup on Kaggle

- Official Google announcement blog post of the winners

- MedGemma Impact Challenge on Kaggle homepage

- Kaggle discussion announcing the winners

- Josh's talk on how to run language models locally on iOS devices

- My talk on the power of small language models (contains plenty of tidbits we learned throughout the Sunny project)

Credits

- Daniel Bourke did the MedGemma model fine-tuning, dataset construction, and model conversion for on-device deployment, as well as the technical writeup and submission video.

- Joshua Bourke created the iOS application of Sunny, including Sunny-MedGemma model integration and interface design.

- Grace Lee designed the Sunny logo and character.

A big thank you to Google for open-sourcing models like MedGemma as well as hosting such a fun competition.

Here’s to the next one!

If you have any questions about Sunny or would like to chat about Medical/Health AI in general, please reach out.